Overview

This project introduces a scalable paradigm for supervised style transfer by reversing the stylization process.

Instead of directly learning to stylize, we propose to learn destylization, i.e., removing stylistic elements from artistic images to recover their natural counterparts. Because the stylized side of each pair is a real artwork, this enables the construction of authentic, pixel-aligned training pairs at scale.

Key insight

Authentic supervision from real artworks is critical for high-fidelity style transfer.

Motivation

Style transfer aims to render an image in a target artistic style while preserving its semantic content. However, it remains fundamentally ill-posed due to the absence of definitive ground-truth stylization pairs.

1) Many existing methods rely on handcrafted losses, which are simple to deploy but usually provide only weak, indirect supervision for style faithfulness.

2) Feature-statistics-based transfer captures global appearance trends as a lightweight prior, but it frequently produces inaccurate style representations and local artifacts.

3) Data-centric pipelines trained on pseudo stylized pairs offer greater training scale, but they still suffer from content leakage and unreliable pseudo-supervision signals.

Even recent data-centric methods remain bounded by the quality ceiling of the pseudo-supervision generated by existing models.

Key idea: learn to stylize via destylization

Instead of synthesizing stylized images, we start from real artworks and remove style to recover natural content.

1) Input: destylized image (content).

2) Target: original artistic image (style).

3) This formulation yields authentic supervision, improved generalization, and elimination of pseudo-supervision artifacts.
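The reversed pairing can be sketched in a few lines. This is an illustrative sketch, not the project's code: `destylize` is a hypothetical stand-in for any destylization model, and strings stand in for images. The point is the direction of supervision: the artwork is never synthesized, it is the target, and only the input is model-generated.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class TrainingPair:
    source: Any  # destylized image (content): model input
    target: Any  # original artwork (style): supervision target


def make_pair(artwork: Any, destylize: Callable[[Any], Any]) -> TrainingPair:
    """Build a stylization pair by destylizing a real artwork.

    The target is the untouched artwork, so the supervision is authentic.
    `destylize` is a hypothetical callable, not a specific model API.
    """
    return TrainingPair(source=destylize(artwork), target=artwork)


# Toy usage: a string substitution pretends to remove the style.
pair = make_pair("oil_painting.png", lambda img: img.replace("oil_painting", "photo"))
```

Training then runs in the usual stylization direction (source to target), even though the data was produced in the opposite direction.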

Method: DeStylePipe

We propose DeStylePipe, a progressive multi-stage destylization framework.

Stage 1: Global general destylization

- Universal instruction-based destylization

- Handles simple styles efficiently

Stage 2: Category-wise instruction adaptation

- Style-aware prompt rewriting

- Improved performance on complex styles

Stage 3: Specialized model adaptation

- Fine-tuning for difficult styles (e.g., clay, origami)

At each stage, outputs are filtered for quality control.
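One plausible reading of the progressive design is a cascade: an artwork escalates to the next, more specialized stage only when the previous stage's output fails the quality filter. The sketch below assumes that cascade behavior; the stage callables and the `passes` filter are hypothetical placeholders, not the DeStylePipe implementation.

```python
from typing import Any, Callable, Optional, Sequence, Tuple

# Hypothetical interfaces: a stage maps an artwork to a candidate natural image,
# and the filter decides whether that candidate is an acceptable destylization.
Stage = Callable[[Any], Any]
QualityFilter = Callable[[Any, Any], bool]


def progressive_destylize(
    artwork: Any, stages: Sequence[Stage], passes: QualityFilter
) -> Optional[Tuple[int, Any]]:
    """Run stages from most general to most specialized.

    Returns (stage_number, output) for the first output that passes the
    filter, or None if every stage fails and the artwork is discarded.
    """
    for stage_number, stage in enumerate(stages, start=1):
        candidate = stage(artwork)
        if passes(artwork, candidate):
            return stage_number, candidate
    return None


# Toy usage: strings stand in for images.
stages = [
    lambda art: "residual brush strokes",  # stage 1: global instruction, fails here
    lambda art: "natural photo",           # stage 2: category-wise instruction
    lambda art: "natural photo",           # stage 3: specialized model (not reached)
]
result = progressive_destylize(
    "clay_figure.png", stages, lambda art, out: out == "natural photo"
)
```

Under this assumption, simple styles exit after the cheap global stage, and only the hard cases pay for the specialized model.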

DestyleCoT-Filter

We introduce DestyleCoT-Filter, a Chain-of-Thought-based filtering mechanism.

Content preservation

- Region-level object identification

- Object consistency checking

Style removal

- Style attribute decomposition

- Residual style analysis

Filtering rule

- Content score ≥ 4

- Style removal score ≥ 4

Only samples that satisfy both thresholds are kept, which ensures high-quality supervision data.
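The filtering rule reduces to a conjunction of two thresholds applied to the judge's final scores. In the sketch below, the response format and the `parse_scores` helper are hypothetical assumptions; the score scale is not stated in this section, and the example values are illustrative only. The thresholds are the ones listed above.

```python
import re
from dataclasses import dataclass

# Thresholds from the filtering rule.
CONTENT_THRESHOLD = 4
STYLE_REMOVAL_THRESHOLD = 4


@dataclass(frozen=True)
class FilterScores:
    content: int        # content preservation score
    style_removal: int  # style removal score


def parse_scores(judge_output: str) -> FilterScores:
    """Extract the final scores from a judge's chain-of-thought response.

    Assumes a hypothetical output format ending in lines such as
    'Content score: 4' and 'Style removal score: 5'.
    """
    content = re.search(r"Content score:\s*(\d+)", judge_output)
    style = re.search(r"Style removal score:\s*(\d+)", judge_output)
    if content is None or style is None:
        raise ValueError("judge output is missing a score")
    return FilterScores(int(content.group(1)), int(style.group(1)))


def keep_sample(scores: FilterScores) -> bool:
    """Keep a sample only if both scores meet their thresholds."""
    return (
        scores.content >= CONTENT_THRESHOLD
        and scores.style_removal >= STYLE_REMOVAL_THRESHOLD
    )


reply = (
    "Objects: cat, sofa, lamp; all present and consistent.\n"
    "Residual style: visible brush texture on the wall.\n"
    "Content score: 5\n"
    "Style removal score: 3"
)
decision = keep_sample(parse_scores(reply))  # False: residual style remains
```

Keeping the reasoning text separate from the two final numbers lets the decision itself stay a simple, auditable rule.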

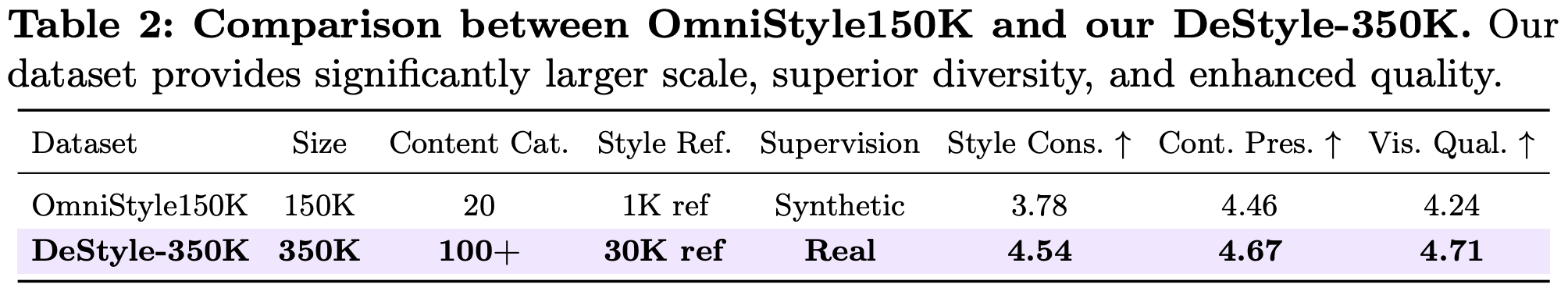

Dataset: DeStyle-350K

We construct DeStyle-350K, a large-scale dataset:

- 350K triplets

- 500+ styles

- 100+ content categories

Each triplet:

- destylized image (content)

- reference image

- style image (target)

Compared with synthetic alternatives, this dataset provides authentic supervision.
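A triplet can be represented as a small record. The field names and metadata below are hypothetical, and the role of the reference image is an assumption: here it is treated as a style exemplar passed to the model alongside the content, since this section lists it without specifying its role.

```python
from dataclasses import dataclass
from typing import Any, Dict


@dataclass(frozen=True)
class DeStyleTriplet:
    content: Any    # destylized image: natural-looking model input
    reference: Any  # reference image (assumed: style exemplar used as conditioning)
    target: Any     # original artwork: ground-truth stylized output
    style: str      # hypothetical metadata: style label
    category: str   # hypothetical metadata: content category


def to_training_example(t: DeStyleTriplet) -> Dict[str, Any]:
    """Map a triplet to model inputs and a target (keys are hypothetical)."""
    return {"inputs": (t.content, t.reference), "target": t.target}


# Toy usage: strings stand in for images.
triplet = DeStyleTriplet("photo.png", "ref_ukiyoe.png", "ukiyoe.png", "ukiyo-e", "landscape")
example = to_training_example(triplet)
```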

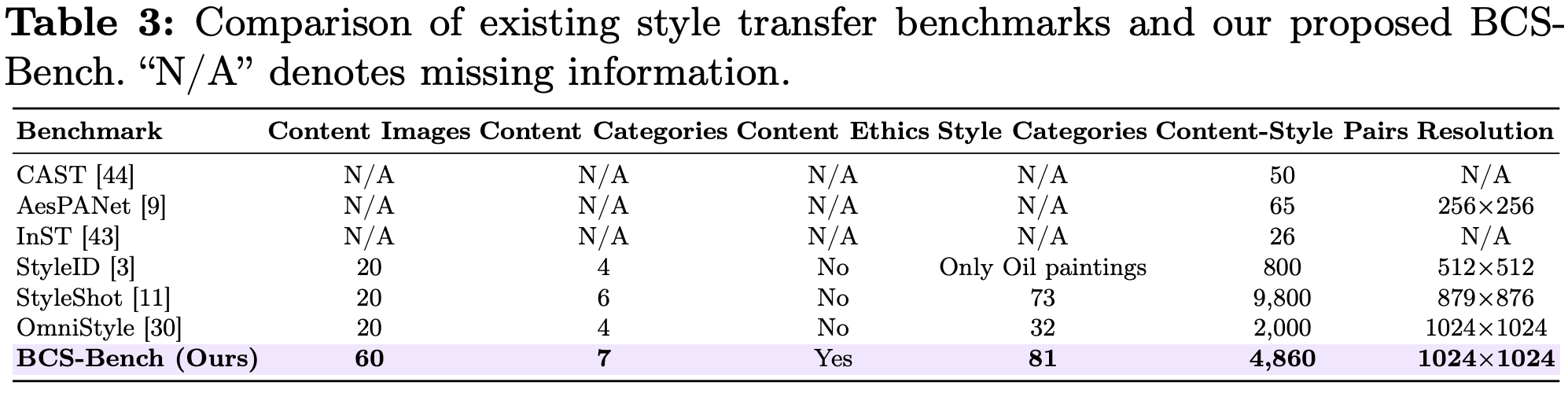

Benchmark: BCS-Bench

We propose BCS-Bench, featuring:

- 81 styles × 60 contents

- 7 semantic categories

- 1024 resolution

- 4,860 pairs

Because every content is paired with every style (81 × 60 = 4,860), the benchmark supports balanced evaluation across both content and style diversity.
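The pair count follows directly from a full cross product of styles and contents. The sketch below uses placeholder identifiers (the actual style and content names are not listed here) to check the count and the per-style and per-content balance.

```python
from collections import Counter
from itertools import product

NUM_STYLES = 81
NUM_CONTENTS = 60

# Placeholder identifiers; the actual style and content names are not given here.
styles = [f"style_{i:02d}" for i in range(NUM_STYLES)]
contents = [f"content_{j:02d}" for j in range(NUM_CONTENTS)]

# Full cross product: every content image is paired with every style.
pairs = list(product(contents, styles))
assert len(pairs) == NUM_STYLES * NUM_CONTENTS == 4860

# Balance check: each style appears once per content, and vice versa.
style_counts = Counter(style for _, style in pairs)
content_counts = Counter(content for content, _ in pairs)
assert set(style_counts.values()) == {NUM_CONTENTS}
assert set(content_counts.values()) == {NUM_STYLES}
```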

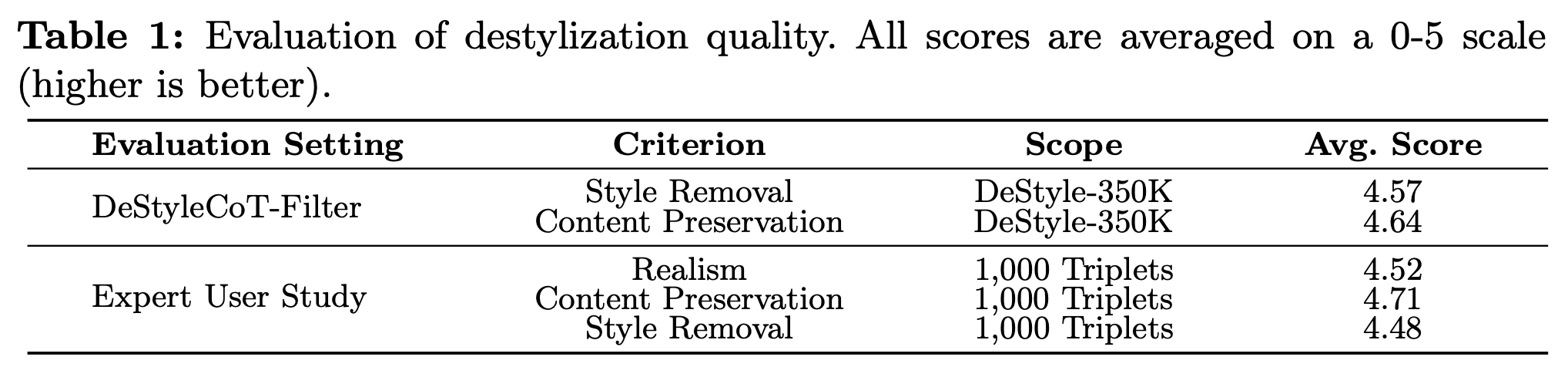

Destylization results

Our method:

- removes diverse artistic styles

- preserves fine-grained structures

- maintains pixel-level alignment

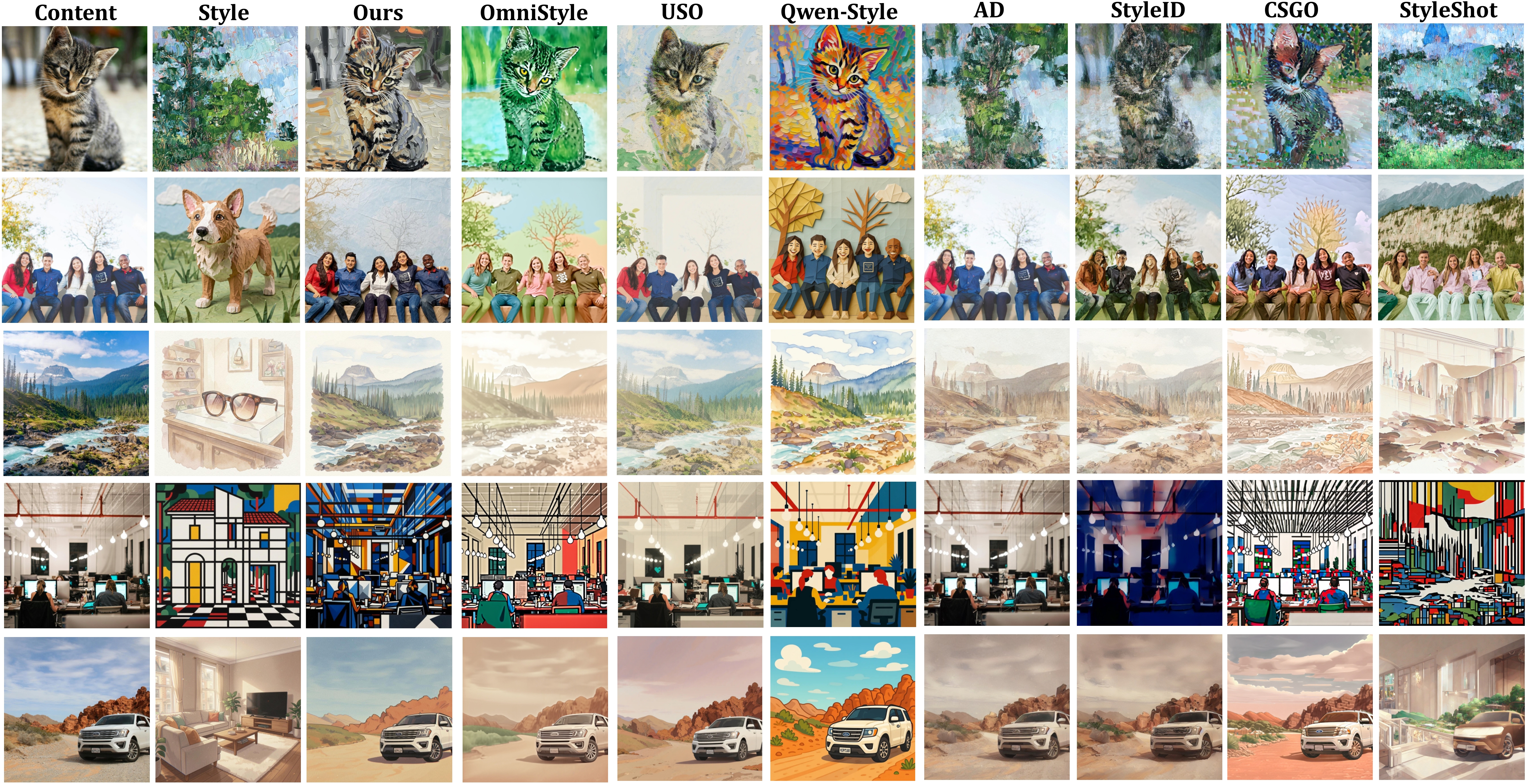

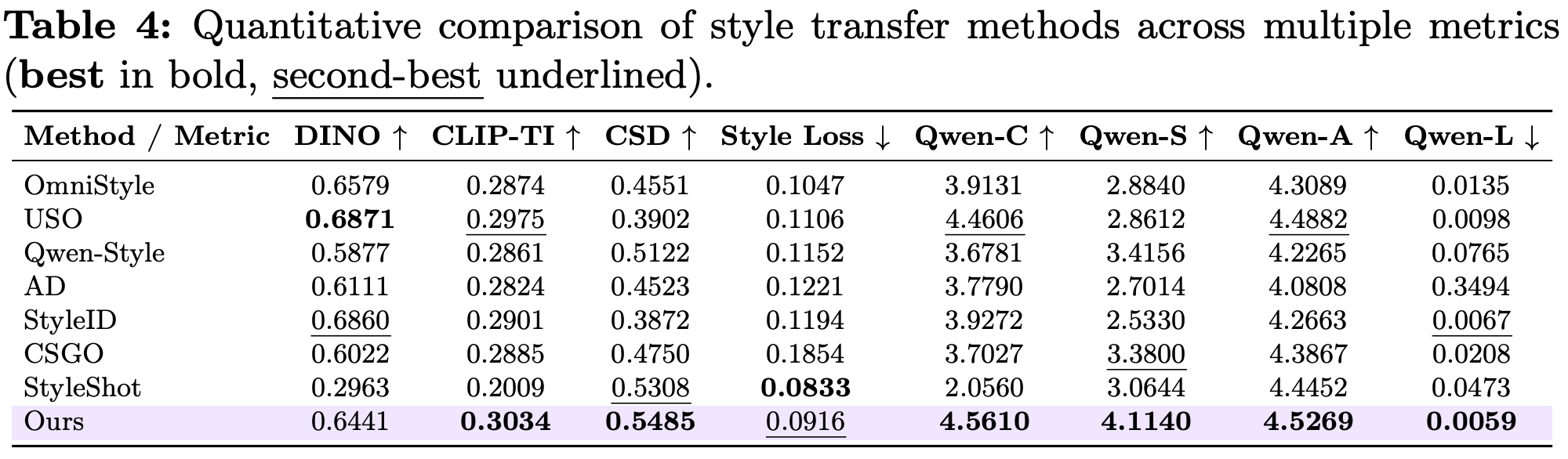

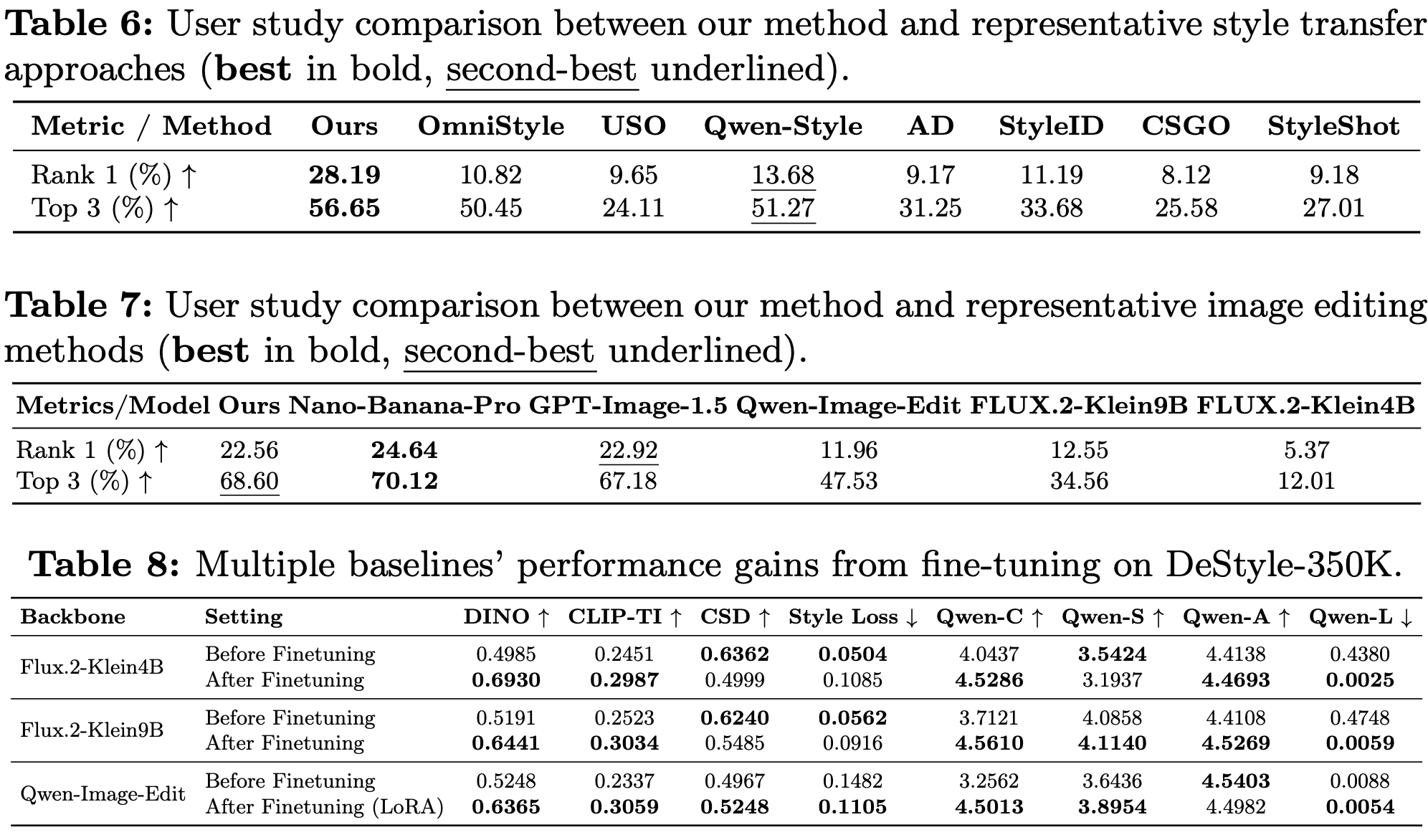

Comparison with style transfer methods

Our method:

- avoids content leakage

- preserves identity and structure

- achieves stronger style fidelity

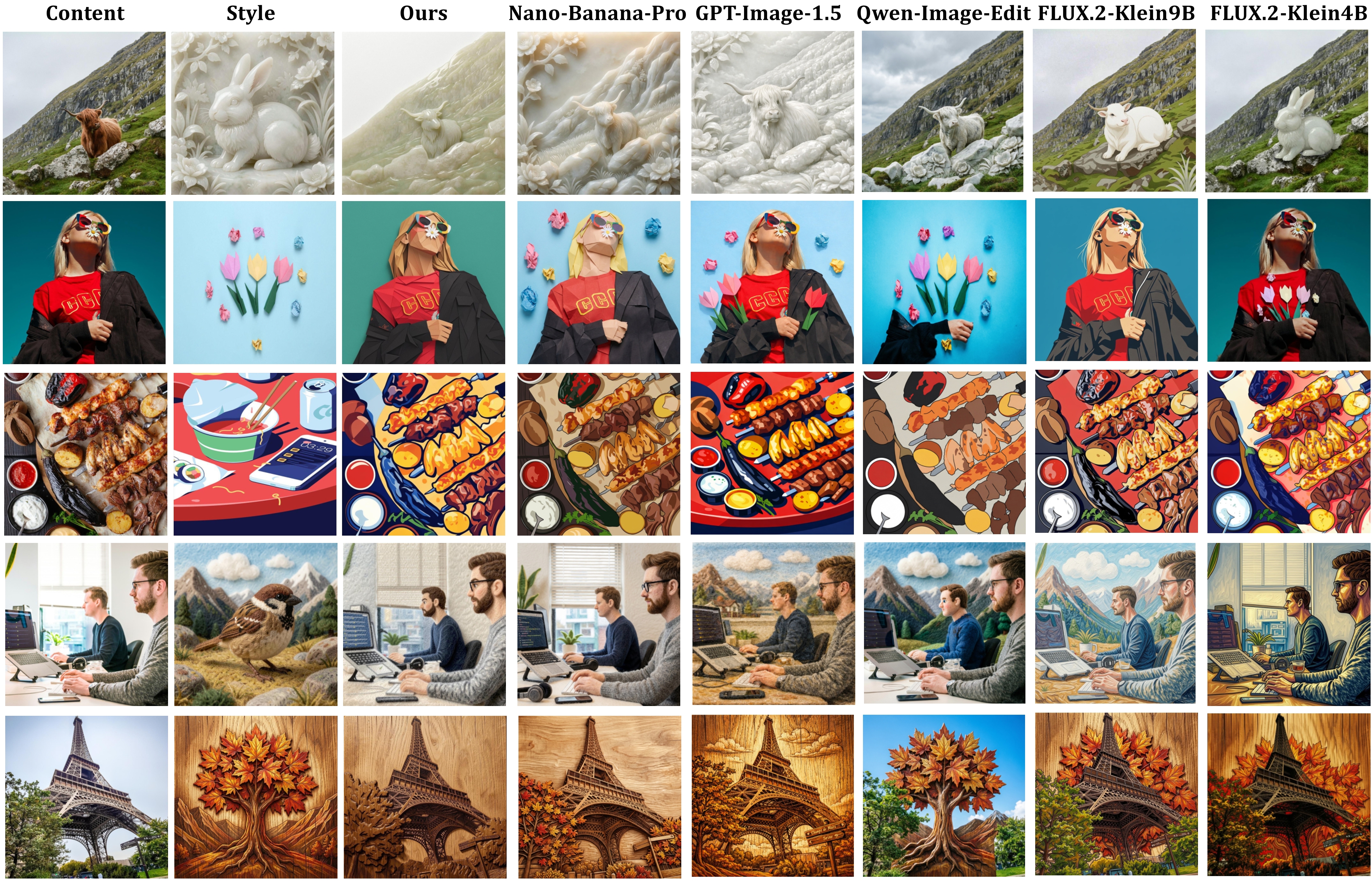

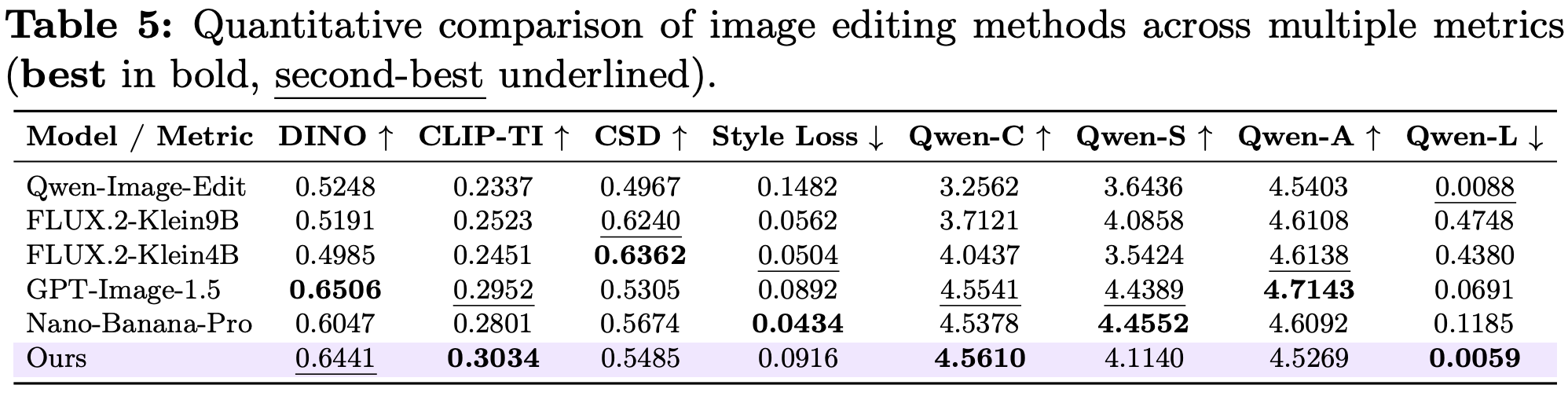

Comparison with image editing models

Compared with existing image editing models, our method shows:

- better content preservation

- less semantic leakage

- more accurate style transfer

Quantitative results

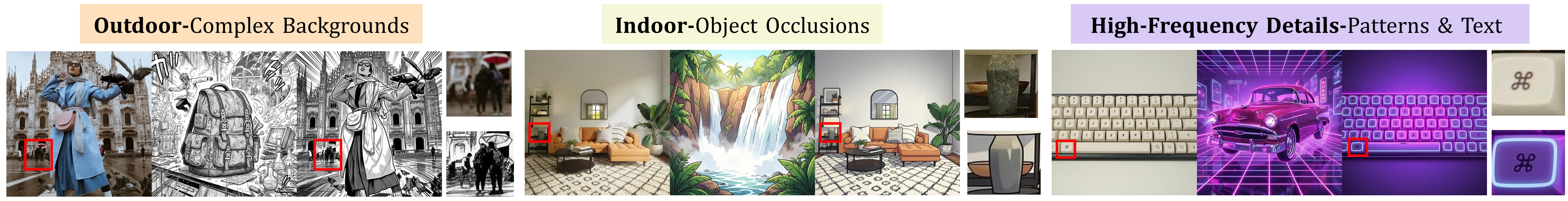

Robustness

Our method maintains:

- structural consistency in complex scenes

- robustness to occlusion and fine details

Ablation: multi-stage refinement

- Stage 1: incomplete removal

- Stage 2: improved results

- Stage 3: fully natural outputs for complex styles

Conclusion

We introduce a new paradigm for supervised style transfer:

- Learn stylization via destylization

- Build authentic large-scale supervision

- Achieve superior stylization quality

This work demonstrates that destylization is a scalable and reliable solution for style transfer.

More results